“Entropy pursuit”:

This is a Bayesian, model-based framework for determining what evidence to acquire from multiple scales and locations, and for coherently integrating the evidence by updating likelihoods. One component is a prior distribution on a binary interpretation vector; each bit represents a high-level scene attribute with widely varying degrees of specificity and resolution. The other component is a simple conditional data model for a corresponding family of learned binary classifiers. The classifiers are implemented sequentially and adaptively: the order of execution is determined online, during scene parsing, and is driven by removing as much uncertainty as possible about the overall scene interpretation given the evidence to date.
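
The adaptive ordering described above can be illustrated with a minimal sketch. It is not the project's implementation: it assumes a small enumerable set of candidate interpretations, classifier outputs that are conditionally independent given the interpretation, and a hypothetical table `likelihood[k, h]` giving the probability that classifier `k` fires under interpretation `h`. At each step the sketch picks the unused classifier with the largest mutual information with the interpretation under the current posterior, then applies Bayes' rule to the observed answer.

```python
import numpy as np

def binary_entropy(p):
    """Entropy in bits of a Bernoulli(p) variable, elementwise."""
    p = np.clip(p, 1e-12, 1 - 1e-12)
    return -(p * np.log2(p) + (1 - p) * np.log2(1 - p))

def next_test(posterior, likelihood, used):
    """Index of the unused classifier with the largest expected entropy reduction.

    posterior:  shape (H,), current distribution over interpretations
    likelihood: shape (K, H), assumed P(classifier k fires | interpretation h)
    used:       set of classifier indices already executed
    """
    p_fire = likelihood @ posterior                      # marginal P(X_k = 1)
    # Mutual information I(Y; X_k) = H(X_k) - H(X_k | Y)
    gain = binary_entropy(p_fire) - binary_entropy(likelihood) @ posterior
    for k in used:
        gain[k] = -np.inf                                # never repeat a test
    return int(np.argmax(gain))

def update(posterior, likelihood, k, outcome):
    """Bayes update of the posterior after classifier k returns outcome (0 or 1)."""
    like = likelihood[k] if outcome == 1 else 1.0 - likelihood[k]
    unnormalized = posterior * like
    return unnormalized / unnormalized.sum()

# Toy example: 4 interpretations, 3 classifiers (all numbers are made up).
prior = np.full(4, 0.25)
likelihood = np.array([
    [0.9, 0.9, 0.1, 0.1],   # splits the hypotheses in half
    [0.5, 0.5, 0.5, 0.5],   # uninformative
    [0.9, 0.1, 0.1, 0.1],   # isolates the first hypothesis
])
first = next_test(prior, likelihood, used=set())
posterior = update(prior, likelihood, first, outcome=1)
second = next_test(posterior, likelihood, used={first})
```

In this toy setting the half-splitting classifier is chosen first, and the one that isolates a single interpretation is chosen once the answer has narrowed the field; the uninformative classifier is never preferred.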

Theoretical foundations:

In the “twenty questions” approach to pattern recognition, the object of analysis is the computational process itself and the key structure is a hierarchy of tests or questions, one for each cell in a tree-structured, recursive partitioning of the space of hypotheses. Under a natural model for total computation and the joint distribution of tests, coarse-to-fine strategies are optimal whenever the cost-to-power ratio of the test at every cell is less than the sum of the cost-to-power ratios for the children of the cell. Under mild assumptions, good designs exhibit a steady progression from broad scope coupled with low power to high power coupled with dedication to specific explanations.
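
The optimality condition can be checked mechanically on a given hierarchy. The sketch below is illustrative only: the `Cell` class and its `cost` and `power` fields are hypothetical names, and the definitions of cost and power are taken as given numbers rather than derived from any model. It verifies that, at every internal cell, the cost-to-power ratio of the cell's test is smaller than the sum of the ratios of its children.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class Cell:
    """One cell of the hypothesis partition, with the cost and power of its test."""
    cost: float
    power: float
    children: List["Cell"] = field(default_factory=list)

def ratio(cell: Cell) -> float:
    """Cost-to-power ratio of the test attached to a cell."""
    return cell.cost / cell.power

def satisfies_condition(cell: Cell) -> bool:
    """True if every internal cell's ratio is below the sum of its children's ratios."""
    if not cell.children:
        return True
    if ratio(cell) >= sum(ratio(child) for child in cell.children):
        return False
    return all(satisfies_condition(child) for child in cell.children)

# A cheap, low-power root test over two costlier, more powerful child tests.
good = Cell(cost=1.0, power=0.5, children=[Cell(2.0, 0.8), Cell(2.0, 0.8)])
# An expensive root test that violates the condition.
bad = Cell(cost=10.0, power=0.5, children=[Cell(2.0, 0.8), Cell(2.0, 0.8)])
```

Here `good` has a root ratio of 2.0 against a children's sum of 5.0, so the condition holds; `bad` has a root ratio of 20.0 and fails.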

Turing Test for Vision Systems:

In computer vision, as in other fields of AI, the methods of evaluation largely define the scientific effort. Most current evaluations measure detection accuracy, emphasizing labeling regions according to objects from a pre-defined library. But detection is not the same as understanding. We are exploring a different evaluation system, in which a query engine prepares a kind of written test (“restricted Turing test”) that uses binary questions to probe a system's ability to identify attributes and relationships in addition to recognizing objects. The engine is stochastic, but prefers question streams that explore “story lines” about instantiated objects, under the statistical constraint that previous questions and their answers carry essentially no information about the correct answers to ensuing questions.

Confidence Sets for Fine-Grained Categorization:

Standard, fully automated systems for distinguishing among sub-categories of a more basic category, such as an object or shape class, report a single estimate of the category, or perhaps a ranked list. However, these algorithms have non-negligible error rates for most realistic scenarios, which limits their utility in practice, for example in allowing a user to identify a botanical species from a leaf image. Instead, we propose a semi-automated system which outputs a confidence set (CS) – a variable-length list of categories which contains the true one with high probability (e.g., a 99% CS). Performance is then measured by the expected size of the CS, reflecting the effort required for final identification by the user. For images of leaves with natural backgrounds, this method provides the first useful results, at the expense of asking the user to initialize the process by identifying two or three specific landmarks.
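
One generic way to form such a set, shown here only as a sketch and not necessarily the construction used in this project, assumes the system produces a calibrated posterior probability for each category. Categories are added in decreasing order of probability until the accumulated mass reaches the requested level. The function name and the species scores are hypothetical.

```python
def confidence_set(posterior, level=0.99):
    """Smallest list of top-ranked categories whose total probability reaches `level`.

    posterior: dict mapping category name -> non-negative score
               (assumed to be calibrated posterior probabilities)
    """
    total = sum(posterior.values())
    ranked = sorted(posterior.items(), key=lambda item: item[1], reverse=True)
    chosen, mass = [], 0.0
    for name, score in ranked:
        chosen.append(name)
        mass += score / total          # normalize in case scores do not sum to 1
        if mass >= level:
            break
    return chosen

# Made-up scores for a single leaf image.
scores = {"oak": 0.70, "maple": 0.25, "birch": 0.045, "elm": 0.005}
cs_99 = confidence_set(scores, level=0.99)
cs_90 = confidence_set(scores, level=0.90)
```

With these scores the 99% set contains three species and the 90% set contains two, which shows how the list length adapts to the ambiguity of the image.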

Rank Discriminants for Predicting Cellular Phenotypes:

Motivated by technical and cultural barriers to translational research, we are investigating decision rules based on the ordering among the expression values, searching for characteristic perturbations in this ordering from one phenotype to another. “Rank-in-context” is a general framework for designing discriminants, including data-driven selection of the number and identity of the genes in the support (“context”). Given the context, the classifiers have a fixed decision boundary and do not require further access to the training data, a powerful form of complexity reduction. In particular, two-gene comparisons then provide parameter-free building blocks and may generate specific hypotheses for follow-up studies. Our work has shown that prediction rules based on a few such comparisons, even one (“top-scoring pair”), can be as sensitive and specific for detecting human cancers, differentiating subtypes, and predicting clinical outcomes as the far more complex classifiers commonly used in machine learning.
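
A single two-gene comparison can be sketched as follows. This is a simplified illustration under stated assumptions, not the group's code: it scores every gene pair by the difference between the two classes in how often the first gene is expressed below the second, keeps the best pair, and classifies new samples by that ordering alone. The function names and the tiny expression matrix are invented for the example.

```python
import numpy as np
from itertools import combinations

def fit_top_scoring_pair(X, y):
    """Find the gene pair whose ordering differs most between classes 0 and 1.

    X: array of shape (samples, genes) with expression values
    y: array of shape (samples,) with labels 0 or 1
    Returns (i, j, less_means_class0).
    """
    X0, X1 = X[y == 0], X[y == 1]
    best = None
    for i, j in combinations(range(X.shape[1]), 2):
        p0 = np.mean(X0[:, i] < X0[:, j])   # frequency of "gene i below gene j" in class 0
        p1 = np.mean(X1[:, i] < X1[:, j])   # same frequency in class 1
        score = abs(p0 - p1)
        if best is None or score > best[0]:
            best = (score, i, j, bool(p0 > p1))
    _, i, j, less_means_class0 = best
    return i, j, less_means_class0

def predict_top_scoring_pair(model, X):
    """Classify each sample using only the ordering of the two selected genes."""
    i, j, less_means_class0 = model
    less = X[:, i] < X[:, j]
    return np.where(less == less_means_class0, 0, 1)

# Made-up data: gene 0 is below gene 1 in class 0 and above it in class 1.
X = np.array([[1, 5, 3], [2, 6, 9], [0, 4, 1],
              [7, 3, 3], [8, 2, 9], [9, 1, 1]], dtype=float)
y = np.array([0, 0, 0, 1, 1, 1])
model = fit_top_scoring_pair(X, y)
predictions = predict_top_scoring_pair(model, X)
```

Once the pair is chosen, prediction needs no thresholds or weights, which is the sense in which the comparison is parameter-free; it is also unchanged by any monotone transformation applied to a sample's expression values.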

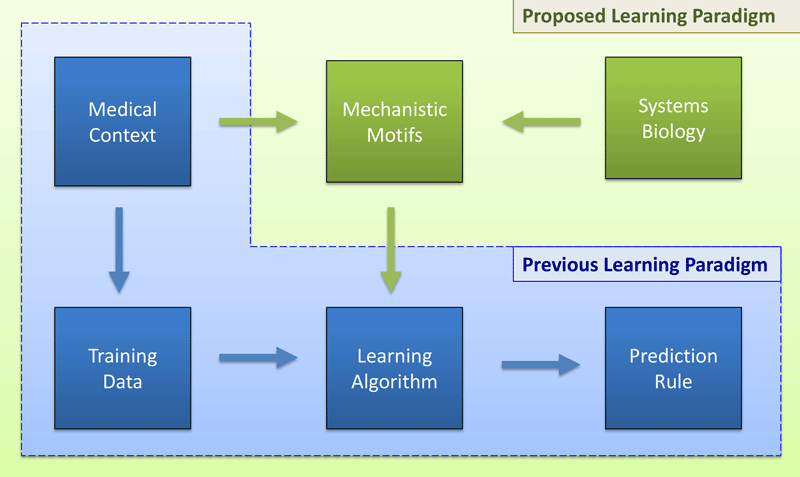

Hardwiring Biological Mechanism into Statistical Learning:

We are attempting to embed phenotype-dependent mechanisms specific to cancer pathogenesis and progression directly into the mathematical form of the decision rules. Two-gene expression comparisons can be seen as “biological switches” and combined into “switch boxes,” basing prediction on the number of on switches. In previous work these decision rules were learned from data using a largely unconstrained search of all possible switches. Our current work connects these switches to circuitry involving microRNAs (miR), transcription factors (TF) and their known targets, for example bi-stable feedback loops involving two miRNAs and two mRNAs. Such network motifs can be identified in signaling pathways and biochemical reactions intimately linked to cancer phenotypes.
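
The counting rule behind a switch box can be sketched in a few lines. The details are assumptions made for illustration: a switch `(i, j)` is taken to be "on" when gene `i` is expressed below gene `j`, and the positive class is predicted when the number of on switches reaches a hypothetical `threshold`. How the switches are selected, whether by unconstrained search or from miRNA and TF circuitry, is outside the sketch.

```python
import numpy as np

def switch_states(x, switches):
    """Boolean state of each switch for one expression profile x.

    A switch (i, j) is treated as "on" when x[i] < x[j] (an assumed convention).
    """
    return np.array([x[i] < x[j] for i, j in switches])

def switch_box_predict(x, switches, threshold):
    """Predict 1 when at least `threshold` switches are on, otherwise 0."""
    return int(switch_states(x, switches).sum() >= threshold)

# Made-up profile over four genes and three hypothetical switches.
profile = np.array([1.0, 3.0, 2.0, 0.5])
switches = [(0, 1), (2, 1), (1, 3)]
n_on = int(switch_states(profile, switches).sum())
label = switch_box_predict(profile, switches, threshold=2)
```

In this example two of the three switches are on, so the profile is assigned to the positive class at a threshold of 2 but not at a threshold of 3.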

Coarse-to-Fine Testing for Genetic Associations:

In stark contrast with Mendelian traits, the genetic variants identified to date for complex traits typically explain, taken together, less than 10% of the phenotype variance. We are working on a new framework based on coarse-to-fine sequential testing, studying the trade-offs resulting from the introduction of carefully chosen biases about the distribution of active variants within genes and pathways. The top-down strategy we propose is essentially opposite to the static (variant-by-variant) and bottom-up (variants to genes and genes to pathways) methods which dominate the field: it is multi-scale and hierarchical, being coarse-to-fine in the resolution of the search units. So far, theoretical results support the basic underlying intuition that, provided there is even moderate clustering of active variants in coarse genetic units for any phenotype of interest, applying hypothesis tests sequentially, from pathways to genes to variants, can markedly increase overall power while maintaining tight control over false positives.
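
The control flow of such a top-down search can be sketched as follows. This is only an illustration of the sequential structure, under several assumptions: a p-value is already available for each pathway, gene, and variant; the nested-dictionary layout, the identifiers, and the three thresholds are hypothetical; and the sketch does not show how thresholds would be calibrated to control false positives.

```python
def coarse_to_fine(pathways, alpha_pathway, alpha_gene, alpha_variant):
    """Test pathways first, then genes in rejected pathways, then their variants.

    pathways: list of {"p": float, "genes": [{"p": float, "variants": {name: p}}]}
    Returns (names of variants declared active, number of tests performed).
    """
    hits, tests_run = [], 0
    for pathway in pathways:
        tests_run += 1
        if pathway["p"] > alpha_pathway:
            continue                      # whole pathway pruned, nothing below is tested
        for gene in pathway["genes"]:
            tests_run += 1
            if gene["p"] > alpha_gene:
                continue                  # gene pruned, its variants are never tested
            for name, p_value in gene["variants"].items():
                tests_run += 1
                if p_value <= alpha_variant:
                    hits.append(name)
    return hits, tests_run

# Made-up p-values; variant names are placeholders, not real identifiers.
pathways = [
    {"p": 0.001, "genes": [
        {"p": 0.002, "variants": {"v1": 1e-4, "v2": 0.3}},
        {"p": 0.4, "variants": {"v3": 1e-5}},
    ]},
    {"p": 0.6, "genes": [
        {"p": 0.01, "variants": {"v4": 1e-6}},
    ]},
]
hits, tests_run = coarse_to_fine(pathways, 0.01, 0.01, 0.001)
```

In this toy run only six of the eleven possible tests are carried out. The variants `v3` and `v4` are never examined because their parent units were not rejected, which illustrates the bias being traded for fewer tests: the strategy pays off when active variants cluster inside the coarse units.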